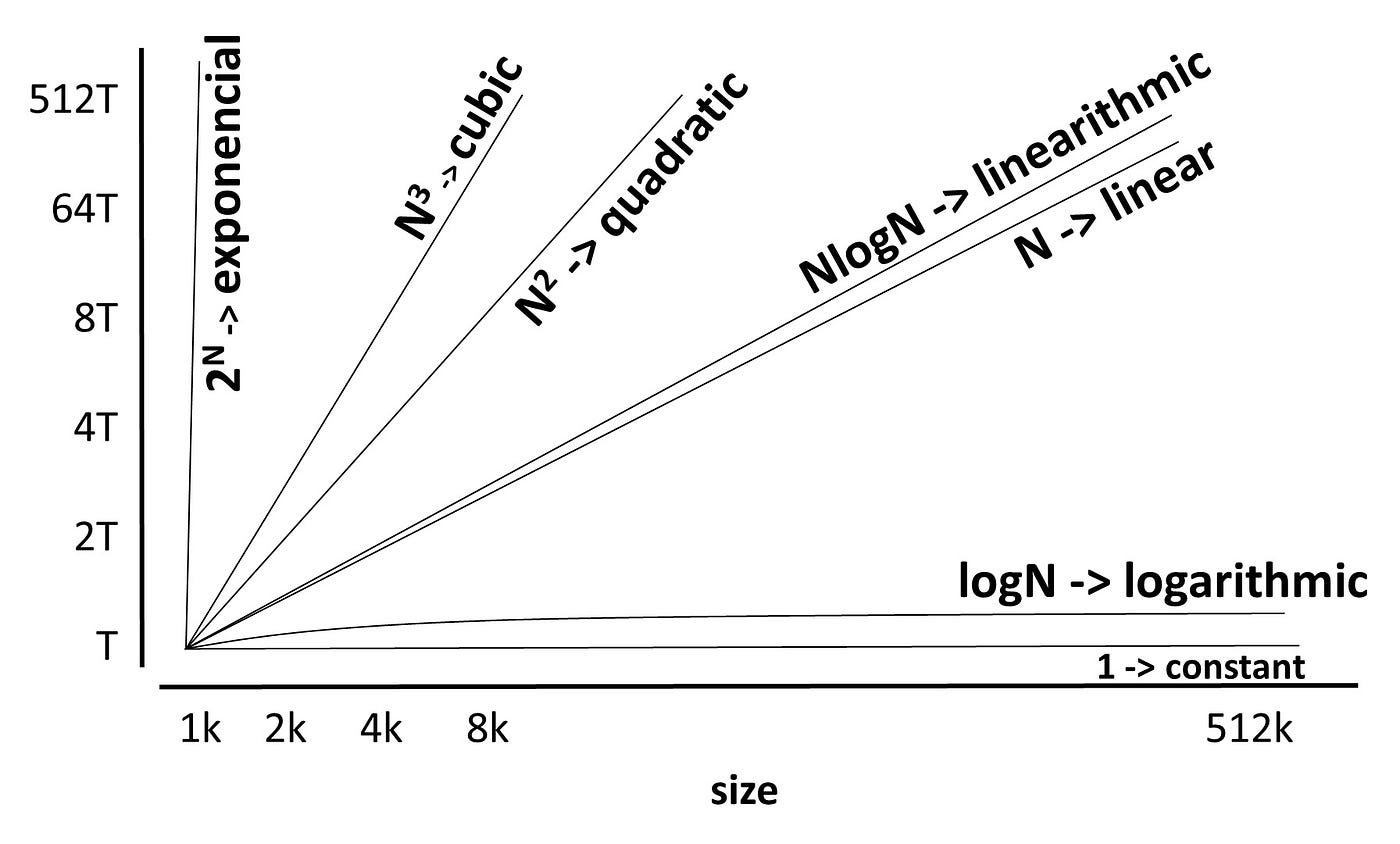

Big O notation is a mathematical notation used in computer science to describe how an algorithm’s resource use grows as its input grows. Rather than measuring seconds or bytes directly, it expresses an upper bound on the number of basic operations (time complexity) or the amount of memory (space complexity) as a function of the input size. Because it ignores constant factors and lower-order terms, it allows two or more algorithms to be compared independently of hardware and implementation details.

Big O notation is written as the letter “O” followed by a function of n in parentheses, such as O(1), O(log n), O(n), or O(n²). The parameter n represents the size of the algorithm’s input. The “O” stands for “order of”: it gives the order of growth of the algorithm’s cost with respect to the input size. For example, the notation O(n) means “the algorithm has linear time complexity,” that is, its running time grows at most linearly with the input size. When the input size doubles, the number of computations the algorithm performs at most roughly doubles.
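To make the growth rates concrete, here is a minimal sketch that counts loop iterations rather than measuring wall-clock time. The function names `count_linear_steps` and `count_quadratic_steps` are hypothetical and chosen only for this illustration; treating one loop iteration as one unit of work is a simplifying assumption.

```python
def count_linear_steps(items):
    """Sum a list while counting loop iterations (one unit of work each)."""
    steps = 0
    total = 0
    for value in items:
        total += value
        steps += 1
    return total, steps


def count_quadratic_steps(items):
    """Visit every ordered pair of elements, an O(n^2) pattern, for contrast."""
    steps = 0
    for _ in items:
        for _ in items:
            steps += 1
    return steps


for n in (10, 20, 40):
    data = list(range(n))
    _, linear = count_linear_steps(data)
    quadratic = count_quadratic_steps(data)
    print(n, linear, quadratic)
# prints:
# 10 10 100
# 20 20 400
# 40 40 1600
```

Each time n doubles, the linear count doubles while the quadratic count quadruples, which is the difference between O(n) and O(n²).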

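Big O notation is useful for quickly comparing algorithms based on their time and space complexity. By analyzing the complexity of competing algorithms, developers can determine which one scales best as inputs grow and choose the most suitable one for their project. Because constant factors are ignored, an algorithm with a worse Big O can still be faster on small inputs, so the comparison matters most when inputs are large.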
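As an illustration of such a comparison, the sketch below counts how many elements two search strategies examine on a sorted list: a left-to-right scan, which is O(n), and binary search, which is O(log n). The helper names `linear_search_steps` and `binary_search_steps` are hypothetical, and searching for the last element is an assumption chosen to show the worst case for the scan.

```python
def linear_search_steps(sorted_items, target):
    """Return how many elements a left-to-right scan examines."""
    steps = 0
    for value in sorted_items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(sorted_items, target):
    """Return how many midpoints binary search examines."""
    steps = 0
    low, high = 0, len(sorted_items) - 1
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if sorted_items[mid] == target:
            break
        if sorted_items[mid] < target:
            low = mid + 1   # target can only be in the upper half
        else:
            high = mid - 1  # target can only be in the lower half
    return steps


for n in (1_000, 1_000_000):
    data = list(range(n))
    target = n - 1  # last element: worst case for the linear scan
    print(n, linear_search_steps(data, target), binary_search_steps(data, target))
```

For 1,000 elements the scan examines all 1,000 while binary search examines 10. Growing the input a thousandfold multiplies the scan's work by a thousand but adds only about ten more steps to binary search.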

Big O notation is often used alongside Big Omega and Big Theta notation. Big O gives an upper bound on an algorithm’s growth rate, while Big Omega gives a lower bound. Big Theta is more precise than either: it applies only when the upper and lower bounds match, so it pins down the growth rate exactly, up to constant factors. Big Omega is seen less often on its own.
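For readers who want the precise statements, the three notations can be written as follows. This is the standard textbook formulation, not something specific to this article; $f$ and $g$ are functions of the input size $n$, and $c$, $c_1$, $c_2$, $n_0$ are positive constants introduced only for the definitions.

$$
\begin{aligned}
f(n) = O(g(n)) &\iff \exists\, c > 0,\ n_0 : \ f(n) \le c \cdot g(n) \ \text{ for all } n \ge n_0 \\
f(n) = \Omega(g(n)) &\iff \exists\, c > 0,\ n_0 : \ f(n) \ge c \cdot g(n) \ \text{ for all } n \ge n_0 \\
f(n) = \Theta(g(n)) &\iff \exists\, c_1, c_2 > 0,\ n_0 : \ c_1 \cdot g(n) \le f(n) \le c_2 \cdot g(n) \ \text{ for all } n \ge n_0
\end{aligned}
$$

As a worked example, take $f(n) = 3n + 5$. Since $3n + 5 \le 4n$ whenever $n \ge 5$, we have $f(n) = O(n)$ with $c = 4$ and $n_0 = 5$. Since $3n + 5 \ge 3n$ for all $n \ge 1$, we also have $f(n) = \Omega(n)$, and therefore $f(n) = \Theta(n)$. Note that $f(n) = O(n^2)$ is also true, because Big O is only an upper bound, but $f(n) = \Theta(n^2)$ is false.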

Big O notation is a powerful tool for analyzing the time and space complexity of algorithms and for comparing how competing algorithms scale. As such, it is widely used throughout computer science.