Collinearity in regression analysis is a situation where two or more predictor variables are highly correlated. It arises in multiple regression analysis; simple linear regression has only one predictor, so there is nothing for that predictor to be collinear with. When collinearity is present, individual coefficient estimates become hard to interpret, and the statistical power of inferences about them can drop significantly.

Collinearity occurs when one predictor variable can be expressed, exactly or approximately, as a linear combination of the others. In the exact case the least-squares coefficients are not uniquely determined. In the approximate case they can still be computed, but their variances are inflated, so the estimates become unstable: small changes in the data can produce large changes in the coefficients, and iterative fitting procedures may fail to converge.
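The instability described above can be illustrated with a small simulation. This is a minimal sketch, not a definitive experiment: the function name `simulate_coef_spread`, the true coefficients, the sample sizes, and the correlation values are all assumptions chosen for illustration. It refits ordinary least squares on many simulated samples and measures how much the estimate of one slope varies.

```python
import numpy as np

def simulate_coef_spread(rho, n_obs=100, n_sims=500, seed=0):
    """Standard deviation of the OLS estimate of the first slope across
    repeated simulated samples whose two predictors have correlation rho."""
    rng = np.random.default_rng(seed)
    cov = np.array([[1.0, rho], [rho, 1.0]])
    estimates = []
    for _ in range(n_sims):
        # Draw two predictors with the requested correlation.
        X = rng.multivariate_normal([0.0, 0.0], cov, size=n_obs)
        # Assumed true model: y = x1 + x2 + unit-variance noise.
        y = X[:, 0] + X[:, 1] + rng.normal(size=n_obs)
        # Add an intercept column and fit by least squares.
        design = np.column_stack([np.ones(n_obs), X])
        beta, *_ = np.linalg.lstsq(design, y, rcond=None)
        estimates.append(beta[1])  # estimated slope of x1
    return float(np.std(estimates))

spread_independent = simulate_coef_spread(rho=0.0)
spread_collinear = simulate_coef_spread(rho=0.99)
print("spread with uncorrelated predictors:", spread_independent)
print("spread with highly correlated predictors:", spread_collinear)
```

Under these assumptions the spread with rho = 0.99 should come out several times larger than with rho = 0, even though the true coefficients and the noise level are identical in both settings.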

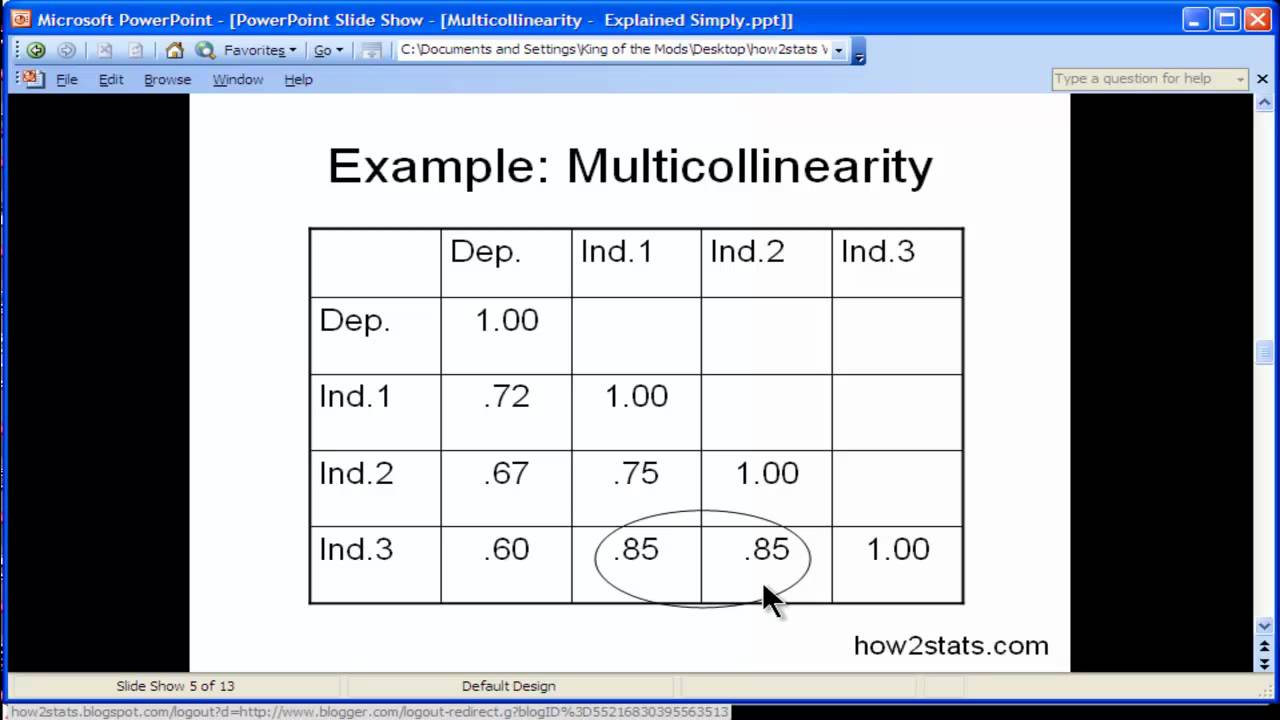

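To detect and measure the degree of collinearity, several different criteria can be used. The two most commonly used are the correlation coefficient, which measures the linear correlation between a pair of variables, and the Variance Inflation Factor (VIF), which measures how much the variance of a coefficient estimate is inflated relative to what it would be if that predictor were uncorrelated with the others. For a given predictor, the VIF equals 1 / (1 − R²), where R² comes from regressing that predictor on all the other predictors. Unlike pairwise correlations, the VIF can also reveal collinearity that involves three or more variables at once. A common rule of thumb is that a VIF greater than 10 suggests strong collinearity.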
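As a rough sketch of how the VIF can be computed, the code below regresses each predictor on the remaining ones and applies 1 / (1 − R²). The function name `variance_inflation_factors` and the synthetic data are assumptions made for illustration; statistical packages provide their own implementations, which may differ in details such as how the intercept is handled.

```python
import numpy as np

def variance_inflation_factors(X):
    """Return the VIF of each column of X (n_samples x n_predictors)."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    vifs = np.empty(p)
    for j in range(p):
        target = X[:, j]
        # Regress predictor j on an intercept plus all other predictors.
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        residuals = target - others @ coef
        ss_res = residuals @ residuals
        ss_tot = np.sum((target - target.mean()) ** 2)
        r_squared = 1.0 - ss_res / ss_tot
        # With exact collinearity r_squared is 1 and the VIF is infinite.
        vifs[j] = 1.0 / (1.0 - r_squared)
    return vifs

# Hypothetical data: x2 is nearly a copy of x1, x3 is unrelated to both.
rng = np.random.default_rng(0)
x1 = rng.normal(size=200)
x2 = x1 + 0.1 * rng.normal(size=200)
x3 = rng.normal(size=200)
vifs = variance_inflation_factors(np.column_stack([x1, x2, x3]))
print(vifs)
```

With data built this way, the first two VIFs should land well above the rule-of-thumb threshold of 10, while the third should stay close to 1.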

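When collinearity occurs in a regression analysis, there are several ways to mitigate its effects. These include ridge regression, which shrinks the coefficients by penalizing their size; principal components analysis, which replaces the correlated predictors with a smaller set of uncorrelated components; and variable selection techniques such as best subset selection.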
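Of these options, ridge regression is the simplest to sketch. The example below uses the closed-form solution on standardized predictors. It is a minimal illustration under stated assumptions: the function name `ridge_fit`, the penalty value `alpha=10.0`, and the synthetic data are all hypothetical, and in practice the penalty would normally be chosen by cross-validation.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Closed-form ridge regression on standardized predictors.

    Returns slopes on the standardized scale. The intercept is handled by
    centering y, and alpha=0 reduces to ordinary least squares.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)
    yc = y - y.mean()
    p = Xs.shape[1]
    # Solve (Xs'Xs + alpha * I) beta = Xs'yc.
    return np.linalg.solve(Xs.T @ Xs + alpha * np.eye(p), Xs.T @ yc)

# Hypothetical data: two nearly identical predictors.
rng = np.random.default_rng(0)
x1 = rng.normal(size=50)
x2 = x1 + 0.05 * rng.normal(size=50)
X = np.column_stack([x1, x2])
y = x1 + x2 + rng.normal(size=50)

ols_coef = ridge_fit(X, y, alpha=0.0)     # no penalty: ordinary least squares
ridge_coef = ridge_fit(X, y, alpha=10.0)  # penalized: shrunken coefficients
print("OLS slopes:  ", ols_coef)
print("ridge slopes:", ridge_coef)
```

The penalty adds `alpha` to the diagonal of the cross-product matrix, which keeps it well conditioned even when the predictors are nearly collinear. The price is some bias: the ridge coefficients are deliberately shrunk toward zero in exchange for lower variance.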

Overall, understanding collinearity and its consequences is important when doing linear regression. If it is not taken into account, coefficient estimates can be misinterpreted, and the inferences drawn from them lose much of their power.